Below we showcase some preliminary work by the German-Canadian DCI/EXPLOIT teams on using text analysis tools to explore multilingualism and multiculturalism. DCI (Digital Commons Initiative) is a project led by Jan Christoph Meister (University of Hamburg) and Stéfan Sinclair (McGill University, Montréal). It is funded by a TRANSCOOP grant awarded by the Alexander von Humboldt Stiftung, with matching funds provided by the SSHRC. EXPLOIT (Exploring Linguistic Diversity) is a related joint project financed by an ALLC project grant; it concentrates on programming multilingual analysis tools. For more information on DCI and EXPLOIT, please contact Stéfan Sinclair (stefan.sinclair at mcgill.ca) or Chris Meister (jan-c-meister at uni-hamburg.de).

Some examples of exploring multilingualism and multiculturalism through text analysis tools

The following examples demonstrate how computer-assisted linguistic analysis can support qualitative research into, e.g., the culture-specific formation of conceptual clusters.

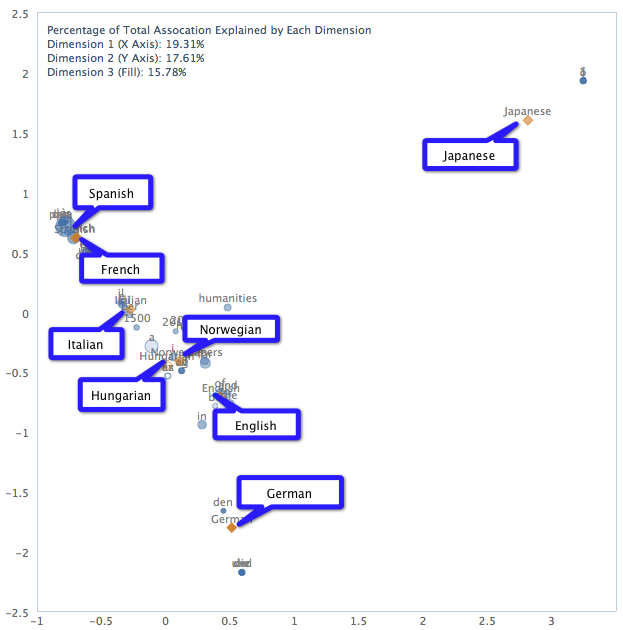

The Voyant correspondence analysis tool plots high-frequency words around the different documents in a corpus. This shows which words are more “related” to certain documents, and it also suggests which documents are related to one another.
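To illustrate the underlying technique, here is a minimal sketch of simple correspondence analysis using NumPy's SVD, assuming a documents-by-words matrix of raw counts. This is the textbook formulation, not Voyant's own implementation, whose details (which words are kept, scaling, which axes are plotted) may differ. The function name and the toy counts are hypothetical.

```python
import numpy as np


def correspondence_analysis(counts, n_dims=2):
    """Simple correspondence analysis of a documents x words count matrix.

    Returns principal coordinates for the rows (documents) and the columns
    (words) on the first n_dims axes, so both can be drawn in one scatter plot.
    """
    N = np.asarray(counts, dtype=float)
    P = N / N.sum()            # correspondence matrix (relative frequencies)
    r = P.sum(axis=1)          # row masses: share of all tokens per document
    c = P.sum(axis=0)          # column masses: share of all tokens per word
    expected = np.outer(r, c)  # P if documents and words were independent
    S = (P - expected) / np.sqrt(expected)  # standardized residuals
    U, sigma, Vt = np.linalg.svd(S, full_matrices=False)
    # Principal coordinates: scale singular vectors by singular values and masses
    rows = U[:, :n_dims] * sigma[:n_dims] / np.sqrt(r)[:, None]
    cols = Vt.T[:, :n_dims] * sigma[:n_dims] / np.sqrt(c)[:, None]
    return rows, cols


# Toy counts (made up): 3 documents x 4 words.
labels = ["doc A", "doc B", "doc C"]
counts = [
    [10, 8, 0, 1],
    [9, 9, 1, 0],
    [0, 1, 12, 10],
]
rows, cols = correspondence_analysis(counts)
for label, (x, y) in zip(labels, rows):
    print(f"{label}: ({x:.2f}, {y:.2f})")
```

Documents A and B use the same words in similar proportions, so they land close together, while C, which relies on different words, ends up far away as an outlier. Distances between document points in this map reflect chi-square distances between their word profiles.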

Using the DH2012 Call for Papers (CFP) in its different language versions, we can see how the languages cluster according to correspondence analysis.
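Before any correspondence analysis, each CFP version has to be reduced to word counts. Voyant does this internally; the sketch below is only a simplified stand-in (not Voyant's code), assuming the plain text has already been extracted from the PDFs. The names `tokenize` and `term_document_matrix`, the `top_n` cut-off and the sample snippets are hypothetical.

```python
import re
from collections import Counter


def tokenize(text):
    # Naive tokenizer: lower-cased runs of word characters. It does not
    # segment Japanese, which does not separate words with spaces.
    return re.findall(r"\w+", text.lower())


def term_document_matrix(docs, top_n=50):
    """Build a documents x words count matrix over the top_n corpus words.

    docs maps a document title (e.g. the language) to its plain text.
    Returns (titles, vocab, counts), where counts[i][j] is how often
    vocab[j] occurs in the document titles[i].
    """
    per_doc = {title: Counter(tokenize(text)) for title, text in docs.items()}
    totals = Counter()
    for doc_counts in per_doc.values():
        totals.update(doc_counts)
    vocab = [word for word, _ in totals.most_common(top_n)]
    titles = list(docs)
    counts = [[per_doc[title][word] for word in vocab] for title in titles]
    return titles, vocab, counts


# Made-up snippets, not the actual CFP texts.
docs = {
    "English": "Digital Humanities conference: the call for papers",
    "German": "Digital Humanities Konferenz: der Call for Papers",
}
titles, vocab, counts = term_document_matrix(docs)
print(vocab)
```

Keying each document by its language plays the same role as giving the documents descriptive titles: the labels in the resulting plot name the language directly.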

We began by opening Voyant Tools with the Scatter skin (a skin that includes the correspondence analysis tool). We then pasted in the URLs of the different versions of the CFP:

http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-general.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-german.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-french.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-italian.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-spanish.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-norwegian.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-hungarian.pdf http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/10/cfp2012-japanese.pdf

That produces a result like the live tool below (note that we changed the document titles to better indicate the language; at the moment, document titles cannot be changed through the Voyant interface):

Because the values are bunched together, the default view can be difficult to read. You can zoom in on part of the visualization to see what's happening, and hover over data points to read their labels. Here is a clearer static image that labels the languages:

This already seems fairly plausible: Spanish and Italian are very close neighbours, Japanese is a clear outlier, and English sits somewhere between the Romance languages and German (which is pushed out almost to the point of being an outlier). We were intrigued by the proximity of Norwegian and Hungarian, especially since they actually cluster closer to the Romance languages than English does. One possible interpretation is that their translations use a lot of English words.
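The English-borrowing hypothesis could be checked more directly. As a minimal sketch, assuming plain-text versions of the English and translated CFPs, we can measure what share of a translation's tokens also occur in the English version. The function name and sample sentences are hypothetical, and the measure is crude: identical spellings such as names, numbers or shared loanwords also count as "English".

```python
import re


def tokenize(text):
    # Lower-cased runs of word characters (a deliberately naive tokenizer).
    return re.findall(r"\w+", text.lower())


def english_overlap(translation, english):
    """Fraction of the translation's tokens that also occur in the English text."""
    english_vocab = set(tokenize(english))
    tokens = tokenize(translation)
    if not tokens:
        return 0.0
    shared = sum(1 for token in tokens if token in english_vocab)
    return shared / len(tokens)


# Made-up sample sentences, not quotations from the CFPs.
english = "Digital Humanities 2012 conference in Hamburg"
norwegian = "Digital Humanities 2012 konferanse i Hamburg"
print(f"{english_overlap(norwegian, english):.2f}")  # prints 0.67
```

Comparing this score across all the translations would show whether Norwegian and Hungarian really stand out, or whether every version shares a similar amount of English.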

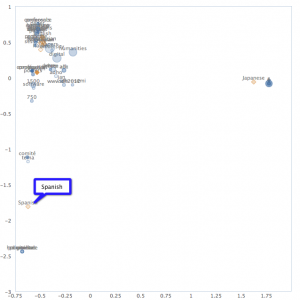

We thought it might be interesting to generate a similar graph with the function words (articles, prepositions, etc.) removed for all languages. Although Voyant allows us to load a stopword list for an individual language, no combined list for all of these languages currently exists, so we created one: http://www.dh2012.uni-hamburg.de/wp-content/uploads/2011/11/stop.all_.txt
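The idea behind a combined list can be sketched in a few lines, assuming each per-language list is already available as a list of words. The function names and the short word lists are hypothetical, and the sketch makes no assumption about the format of the actual stop.all_.txt file.

```python
def combine_stopwords(*word_lists):
    """Merge per-language stopword lists into one lower-cased set."""
    combined = set()
    for words in word_lists:
        combined.update(w.strip().lower() for w in words if w.strip())
    return combined


def remove_stopwords(tokens, stopwords):
    """Keep only the tokens that are not in the stopword set."""
    return [t for t in tokens if t.lower() not in stopwords]


# Tiny excerpts for illustration only; real lists are much longer.
german = ["der", "die", "und"]
french = ["le", "la", "et"]
spanish = ["el", "la", "de"]
stopwords = combine_stopwords(german, french, spanish)
print(remove_stopwords(["la", "conférence", "und", "digital"], stopwords))
# prints ['conférence', 'digital']
```

A single merged set has one trade-off: a word that is a function word in one language but a content word in another (German "die" versus the English verb "die", for instance) is removed from every document.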

We can now look at the interface with the stopword list included, which allows us to compare the two graphs:

These are difficult to read at a small scale, but you can click on the images to see them in more detail. One change is that Spanish is now an outlier (in the bottom left-hand corner), while almost all of the other languages except Japanese bunch together. Again, this is not very surprising, given that all the CFPs share some English, including terms like Digital Humanities Conference. The Spanish outlier is a bit of a mystery, but it led us to realize that our quick-and-dirty stopword list needed more work: some words, like “y”, were not included.
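One way to catch omissions like “y” is to list the most frequent short tokens that the stopword list does not cover, since very short, very frequent words are often function words. A minimal sketch follows; the function name, the three-character cut-off and the sample text are hypothetical choices for illustration.

```python
import re
from collections import Counter


def uncovered_frequent_words(text, stopwords, top_n=10, max_len=3):
    """Most frequent short tokens that are not in the stopword set.

    Short, very frequent tokens are often function words (such as the
    Spanish conjunction "y") that a hastily compiled list has missed.
    """
    tokens = re.findall(r"\w+", text.lower())
    counts = Counter(t for t in tokens if len(t) <= max_len and t not in stopwords)
    return counts.most_common(top_n)


# Made-up Spanish sample and a deliberately incomplete stopword set.
sample = "arte y cultura y sociedad y la historia de la ciencia"
stopwords = {"la", "de", "el"}
print(uncovered_frequent_words(sample, stopwords))
# prints [('y', 3)]
```

Running such a check on each language version before building the combined list would surface gaps like this one early.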

In the spirit of mini-experiments, we will leave this as-is for now, though it would undoubtedly be worth experimenting with better stopword lists for each language. In the meantime, experiment for yourself!